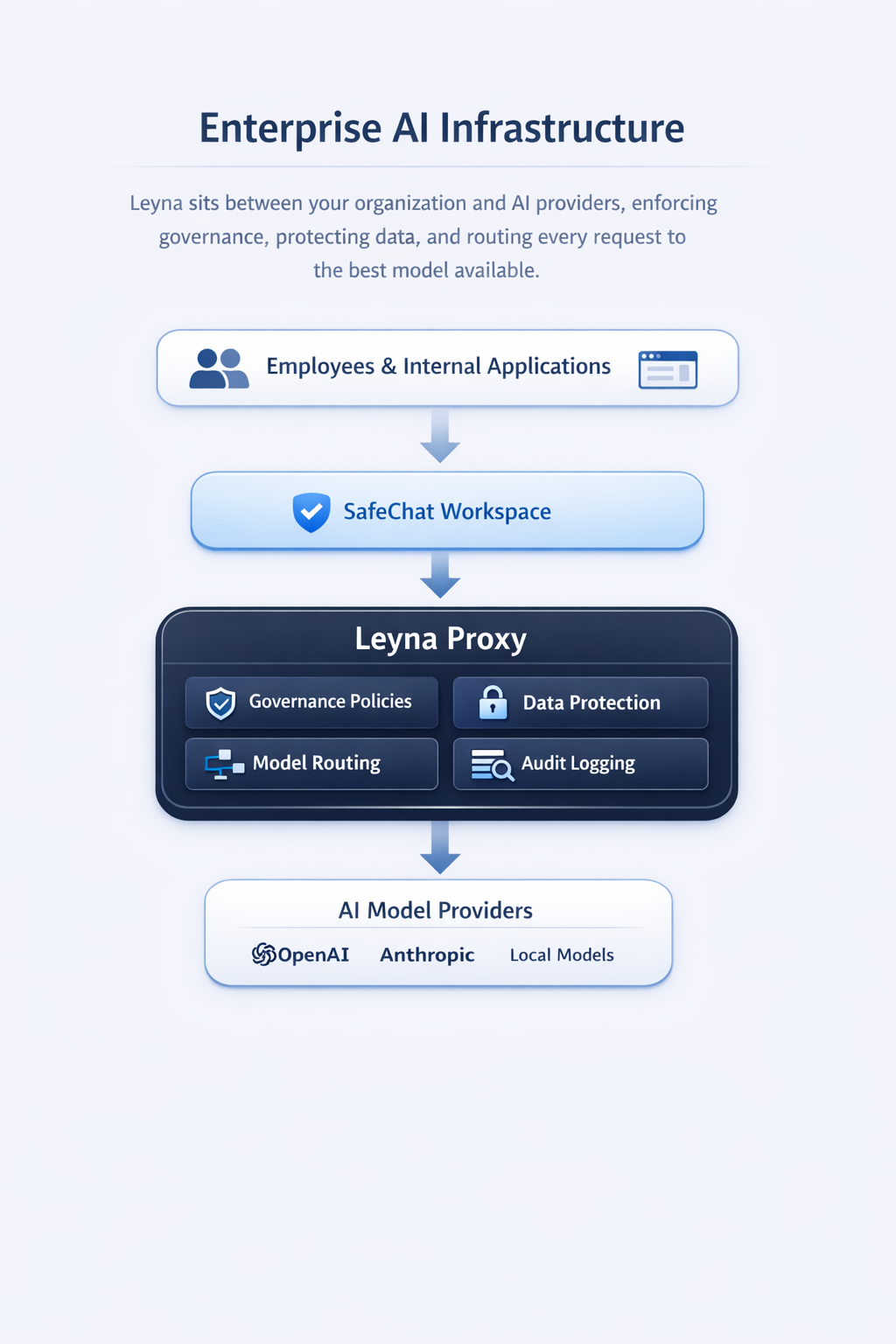

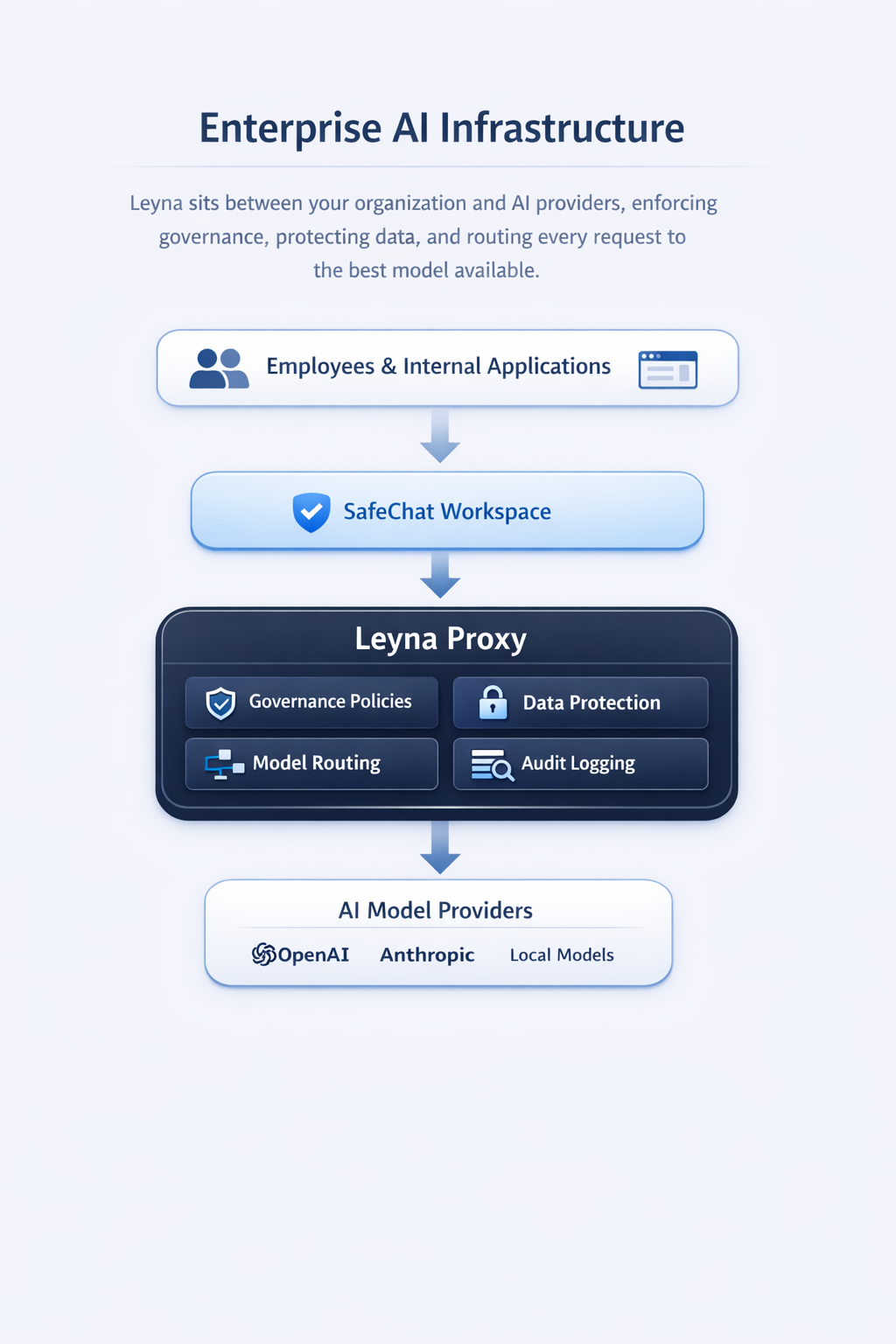

Leyna gives organizations one control boundary for AI across teams, applications, and model providers, with policy enforcement, auditability, security controls, and deployment flexibility.

Built for companies where AI adoption is already happening, but security, compliance, and architecture complexity are blocking broader rollout.

What Leyna Delivers

Leyna is designed for the point where experimentation has already started, but uncontrolled usage, data exposure, and vendor sprawl begin to create risk.

Leyna centralizes the controls organizations need to deploy AI safely across teams, workflows, and model providers.

Enforce organization-wide AI policies for approved models, usage patterns, business units, and data classes.

Protect sensitive data with redaction, routing restrictions, access controls, and private-model policies.

Track prompts, outputs, model selection, provider usage, and policy decisions from one operational layer.

Use external and local models through one control plane without binding governance to a single AI vendor.

Leyna sits between internal applications, employee tools, customer-facing workflows, and the model layer so policy can be enforced before every request leaves the organization boundary.

That makes governance consistent across OpenAI, Anthropic, local models, managed cloud endpoints, and future providers without rebuilding security, logging, and routing logic in each application.
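As an illustration of enforcing policy before a request leaves the organization boundary, the following is a minimal Python sketch, not Leyna's implementation. The `ROUTES` table, the `enforce` function, the `Decision` type, and the e-mail pattern are all hypothetical names chosen for this example. It assumes a policy that redacts e-mail addresses and pins restricted data to a local route.

```python
import re
from dataclasses import dataclass

# Hypothetical policy table (illustrative only): data class -> permitted route.
ROUTES = {"public": "external", "internal": "external", "restricted": "local"}

# Simple e-mail pattern used as a stand-in for a real redaction rule set.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


@dataclass
class Decision:
    route: str        # where the request may be sent
    prompt: str       # prompt text after redaction
    redactions: int   # number of values that were masked


def enforce(prompt: str, data_class: str) -> Decision:
    """Redact e-mail addresses, then choose a route from the data class."""
    redacted, count = EMAIL.subn("[REDACTED_EMAIL]", prompt)
    # Unknown data classes fail closed: they are treated as local-only.
    route = ROUTES.get(data_class, "local")
    return Decision(route=route, prompt=redacted, redactions=count)


if __name__ == "__main__":
    print(enforce("Contact jane.doe@example.com about the contract", "restricted"))
```

Because the check runs in one place, each application only needs to call the gateway instead of carrying its own redaction and routing rules.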

Leyna combines a governed runtime, secure workspace, and administrative control layer so organizations can manage AI usage consistently across the enterprise.

The runtime layer that applies governance, routing, redaction, and provider abstraction before requests reach model endpoints.

A governed environment for employee AI usage under enterprise identity, access, and policy controls.

The operating layer for policies, tenant separation, audit visibility, and governance oversight across the organization.

# Leyna Proxy + Model Brokering

$ leyna proxy start --policies enterprise-default

[READY] Governance and security layer active.

$ leyna policy set --data-class restricted --route local

[ACTIVE] Restricted requests pinned to local models.

$ leyna broker evaluate --use-case "internal-support" --optimize quality,cost,latency

[INFO] Ranking models across OpenAI, Anthropic, Google, and Mistral...

$ leyna broker route --request req_4921

[ROUTE] Claude selected for reasoning quality with redaction policy applied.

[SUCCESS] Request logged, redacted, and policy-audited.
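The brokering step in the session above optimizes for quality, cost, and latency. One way such a ranking could work is a weighted score, sketched below in Python. This is an assumption about the general technique, not a description of how Leyna ranks models. The `Candidate` type, the `score` and `rank` functions, the model names, and all numbers are placeholders invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    quality: float   # normalized to 0..1, higher is better
    cost: float      # normalized to 0..1, lower is better
    latency: float   # normalized to 0..1, lower is better


def score(c: Candidate, weights: dict) -> float:
    """Weighted sum; cost and latency are inverted so higher is always better."""
    return (
        weights["quality"] * c.quality
        + weights["cost"] * (1.0 - c.cost)
        + weights["latency"] * (1.0 - c.latency)
    )


def rank(candidates: list, weights: dict) -> list:
    """Return candidates ordered from best to worst under the given weights."""
    return sorted(candidates, key=lambda c: score(c, weights), reverse=True)


# Placeholder candidates with made-up scores, not measurements of real models.
CANDIDATES = [
    Candidate("model-a", quality=0.9, cost=0.8, latency=0.6),
    Candidate("model-b", quality=0.7, cost=0.2, latency=0.3),
]

if __name__ == "__main__":
    quality_first = {"quality": 0.8, "cost": 0.1, "latency": 0.1}
    print([c.name for c in rank(CANDIDATES, quality_first)])
```

Changing the weights changes the winner, which is why the use case is passed to the evaluation step: a reasoning-heavy workload and a high-volume support workload would weight the same candidates differently.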

Direct model APIs are enough for experimentation. Enterprise deployment needs operational controls that are difficult to rebuild inside every product and workflow.

Centralize standards for approved models, departments, prompts, and data handling.

Give security, compliance, and architecture teams a clear governance boundary to evaluate.

Apply redaction, routing restrictions, and private deployment patterns for regulated workloads.

Keep the governance layer independent as providers, prices, and model quality continue to change.

Leyna is designed for organizations where AI is already useful to the business and the next constraint is control, security, or deployment complexity.

Leyna is positioned as infrastructure, so the deployment model needs to match enterprise procurement, security posture, and operating constraints.

Fastest path for organizations that want a controlled environment without full on-prem complexity.

Designed for organizations that need stronger network ownership, infrastructure separation, and internal security review alignment.

For regulated workloads that require local models, tighter network boundaries, or staged provider access.

Identity is part of the governance story. Leyna connects with enterprise identity providers through OpenID Connect for SSO, access control, and policy-aligned AI usage.

OneLogin: OIDC / SSO

Microsoft Entra ID: OIDC / SSO

Google Workspace: OIDC / SSO

Auth0: OIDC / SSO

Keycloak: OIDC / SSO

Ping Identity: OIDC / SSO

Okta: OIDC / SSO

JumpCloud: OIDC / SSO

Also supports other OpenID Connect-compliant identity providers.
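To show how identity can feed policy, here is a minimal Python sketch that maps claims from an already-verified OIDC ID token to permitted data classes. It is illustrative only. The `GROUP_POLICY` table and both function names are hypothetical, and the `groups` claim is an assumption: it is not a standard OpenID Connect claim, although many identity providers can be configured to emit it. Token signature verification is out of scope here and is assumed to have happened upstream.

```python
# Hypothetical mapping (illustrative only): identity-provider group -> data classes
# that members of the group may send to AI models.
GROUP_POLICY = {
    "legal": {"public", "internal", "restricted"},
    "support": {"public", "internal"},
}


def allowed_data_classes(claims: dict) -> set:
    """Union the data classes granted by each group listed in the token claims."""
    allowed = set()
    # "groups" is an assumed, non-standard claim; missing means no access.
    for group in claims.get("groups", []):
        allowed |= GROUP_POLICY.get(group, set())
    return allowed


def is_permitted(claims: dict, data_class: str) -> bool:
    """True when the authenticated user may submit data of this class."""
    return data_class in allowed_data_classes(claims)


if __name__ == "__main__":
    claims = {"sub": "user-123", "groups": ["support"]}
    print(is_permitted(claims, "internal"), is_permitted(claims, "restricted"))
```

Users with no recognized group receive no data classes, so the sketch denies by default.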

Direct API usage is fine for experiments. Enterprise deployment requires centralized policy, access governance, logging, routing, and deployment control across multiple teams and model providers.

Supported Model Layer

OpenAI: GPT model family

Anthropic: Claude model family

Google: Gemini model family

xAI: Grok model family

AWS Bedrock: Managed foundation models

NVIDIA NIM: Enterprise inference endpoints

Meta: Llama model family

Mistral AI: Mistral and Mixtral models

Cohere: Command model family

Azure OpenAI: Enterprise Azure-hosted models

The governance layer remains stable even as model providers and deployment choices change. Other provider endpoints and local model deployments can be supported as needed.

Leyna supports teams from initial assessment through deployment, governance setup, and production workflow rollout.

Assess current AI usage, identify risk, align stakeholders, and define the implementation roadmap.

Deploy Leyna in the right control boundary with identity, policy, logging, and provider integrations.

Operationalize governed AI for document workflows, internal assistants, and customer-facing use cases.

The assessment helps your team understand current AI usage, identify control gaps, and define a practical path to secure rollout.

Many enterprise rollouts involve consulting firms, system integrators, or client platform teams. Leyna supports that approach with a governance layer designed for collaborative delivery.

Leyna gives organizations a governed runtime, secure workspace, and implementation path to deploy AI safely across teams and workflows.